Domino Query Language

The Domino Query Language documentation for Domino 11 will be available as part of the Domino Designer 11.0 Basic User Guide and Reference. For more information, see the Domino Designer documentation at https://help.hcltechsw.com/dom_designer/designer_welcome.html

Domino Query Language (DQL) is a facility running in Domino core allowing for a terse shorthand syntax for finding documents according to a wide variety of complexity of terms. It leverages existing design elements without the need to write detailed code to access them.

Getting Started

DQL processing uses extracted, optimized design data to make intelligent choices about query plans and to work with some of its syntactic elements like view names. To do that, you first need to create and keep up to date that data in a catalog kept in your data directory, named GQFdsgn.cat.

To create GQFdsgn.cat you run updall with the name of your database with the

-e option, so

load updall myappdb.nsf -e

The first time that is issued, GQFdsgn.cat will be created with a single database cataloged within it. But you must add each database you are going to query using DQL to the catalog in the same manner. It is not recommended that you add all databases to the design catalog; that is a large superset of the extracted data you will need.

For more on this subject, see The Design Catalog below.

DQL Syntax

DQL is can be executed in the Lotusscript and Java Query method on the (Notes)Database object or via the domino-db node.js API.

Using [ and ] to denote option tokens, the syntax of DQL:

Term Boolean Term [ Boolean Term ...]

where Term is

[ Identifier ] Operator [ Value ]

Boolean is

and | or | and not | or not

Identifier is

SummaryField | ‘View or folder name’.Columnname | @function

Operator is

= | > | >= | < | <= | in [all]

Value is

‘ Quoted string value ’ | @dt(‘ datetime value ’) | number | ( in value list )

SummaryField is the name of any field stored in the summary portion of any document in a Domino database.

View or folder name is the name, or an alias, of any view or folder created in a Domino database

Columnname is the programmatic name of any primary sorted column in the view or folder specified in View or folder name

@function is

@all | @ModifiedInThisFile |@DocumentUniqueID | @Created

Quoted string value is any textual value desired to be found in a field or view column specified

datetime value is an ISO8601-compliant value specified in GMT or Zulu time

number is a floating point number, with or without decimal point, including scientific notation

in value list is

‘ value 1’ [ ,’value2’ ... ]

Using the syntax

Typical simple query examples with explanations include:

order_no > 146751 and order_no <= 150111

“Find all documents with order numbers between 146751 and 150111, including the number 150111”

sales_person in (‘Chad Keighley’, ‘Jeff Chantel’, ‘Louis Cawlfield’, ‘Mariel Nathanson’)

“Find all documents that have any one of the four values specified in the sales person field”

date_origin > @dt(‘2018-10-10T10:00:00+0500’)

“Find all documents originating after 10 October 2018 at 10:00.00 EST”

‘Orders’.order_type = ‘Phone’

“Using the Orders view, find documents with a value of ‘Phone’ in the order_type column”

in (‘Orders’, ’Special orders folder 1’, ‘Old_orders 2’)

“Find documents in any of the following views and folders (names specified)”

Value data types

Since Domino features a typeless data model, where any type of data can be stored in any field, the data type of the search operation performed by each query term in DQL is defined by the type of the value specified. So

‘String values’

will always cause a textual match

@dt(‘2018-08-01T11:18:00.00+0500’)

will be satisfied by a datetime match and numbers without quotes like

128992E-2 or 1289

will perform a numeric match

Using booleans

Simple queries can be coupled via Boolean processing:

Order_origin in (‘London’, ‘LA’, ‘Tokyo’) AND date_origin > @dt(‘20160511’) or partno = 388388

“Find documents with (any of the order_origin field values of ‘London’, ‘LA’ or ‘Tokyo’ AND sales date greater than 11 May 11 2016) OR having Part number of 388388”

'Soon to be special’.Status = ‘Shipping’ and ( order_origin = ‘LA’ or sales_person in (‘Chad Keighley’, ‘Jeff Chantel’, ‘Louis Cawlfield’, ‘Mariel Nathanson’))**

“Find documents with values of ‘Shipping’ using the Status column in the ‘Soon to be special’ view AND with either an order_origin of ‘LA’ OR sold by any of Chad Keighley, Jeff Chantel, Louis Cawlfield or Mariel Nathanson”

‘Soon to be special’.Status = ‘Inventory’ and ( order_origin = ‘Detroit’ or NOT sales_person in (‘Harold Cunningham’, ‘Julie Leach’, ‘Gordon Smith’, ‘Terence Henry’))

“Find documents with values of ‘Inventory’ using the Status column in the ‘Soon to be special’ view AND with either an order_origin of ‘Detroit’ OR NOT sold by any of Harold Cunningham, Julie Leach, Gordon Smith of Terence Henry.”

Precedence

DQL has a “natural precedence” (order of processing) where ANDed terms are processed before ORed terms. So to reuse above example

partno = 388388 or Order_origin in (‘London’, ‘LA’, ‘Tokyo’) AND date_origin > @dt(‘20160511’)

The order_origin and date_origin results are to be ANDed together then that result ORed with the partno results.

“Natural precedence” can be overridden using parentheses:

Order_origin in (‘London’, ‘LA’, ‘Tokyo’) AND (date_origin > @dt(‘20160511’) or partno = 388388)

so that the date_origin and partno terms would be ORed then that result ANDed with the results of the order_origin term.

In all

The “all” keyword modifies the inclusive “in” operator, which matches any of the values in the parenthesized value list to be exclusive, matching all of the values in the list. So

Part_no in all ( 389, 27883, 388388, 587992 )

Will only find documents that contain all of the values 389, 27883, 388388 and 587992

Likewise

in all (‘Orders’, ’Special orders folder 1’, ‘Old_orders 2’)

will only find documents in each of the ‘Orders’, ’Special orders folder 1’ and ‘Old_orders 2’ folders or views, documents contained in them all.

Quoted string values

The single quote (') forces DQL processing to interpret arguments as text (versus date/times and numbers). Within a quoted value, a single quote can be specified by two adjacent single quotes, so

O'Malley's dog's collar

can be found using the quoted value:

O''Malley''s dog''s collar

All text terms are evaluated in using case and accent insensitivity.

Date and time values

DQL supports the RFC3339 standard format for datetime strings. Each of the following represent allowable date values:

@dt('2019-08-06T02:09:52Z')

@dt('2019-08-06T02:09:52.6800+04:00')

@dt('2019-08-06')

@dt('02:09:52')

@dt('02:09:52.6800')

@dt('02:09:52.6800+05:00')

Where all operators function against all date values. In addition, the @created

and @modifiedinthisfile function identifiers will utilize

@dt(‘

So, a partial date will match values according to the part specified, if a single day, that day and if a second (or subsecond) within any day, that second. Without timezone modifiers (+ or – hh:mm suffixes) all times are taken as UTC values (GMT). If you want to specify local time, you must use a timezone suffix modifier.

Restriction: Partial date values cannot be found using view searching. So

'myview'.datefield < @dt('2018-09-01')

'myview'.datefield = @dt('02:09:52')

will return an evaluation errors. To use view searches and partial date values where you want to find a date but the time is not important, specify the entire value:

'myview'datefield < @dt('2018-09-01T00:00:00.000Z')

You cannot use a view search to find a partial date value where the date is not important but you are searching for a time.

Note: Date\Time value stored\retrieved to\from domino database is in GMT format. It does not

store timezone information along with values. This will avoid issues related to saved timezone

and current timezone while returning the Date\Time values.

Substitution variables

Since it is useful to run DQL queries that vary only by the values in any given term, it is tempting to build them using string construction. For instance, in Java

String query = "order_no = " + order_num + " and order_origin = '" + order_origination + "'";

where order_num and order_origination are fields with values supplied from outside the code itself. When this technique is used to build queries, a security problem is introduced. Users or external malware can modify query syntax by inputting legal DQL that would change the results of the query to find many more documents, perhaps all documents in the database.

For instance, if order_num contained the value

"32890 or order_origin <= ''"

that part of the query would find every document in the database. This is the infamous SQL Injection exposure.

To eliminate this weakness, DQL supports substitution variables in its syntax so a query can remain unchanged but differing values can be supplied to produce the variety of results desired. There are two types of substitution variables, positional and named.

For positional substitution variables, question marks are supplied in the query:

String query = "order_no = ? and order_origin = ?";

and values are supplied via 1-based assignment, where the order_no value is variable 1 and order_origin is variable 2.

For named substitution variables, question marks followed by alphanumeric and special character text names of 1-15 bytes comprise the name. Here is the same query with named substitution variables:

String query = "order_no = ?order_num and order_origin = ?order_origin";

and values are supplied using the text following the question mark only.

See the API documentation for details on how to set these variable values in the language you are using.

For 10.0.1, the Domino Java and Lotusscript classes only support named substitution variables.

Limits

Even though the DQL processing engine takes measures to avoid dominating system resources, it is still possible to write and execute a runaway query. To prevent that, three limit settings are provided. If any one of this is exceeded, query processing will end, and an error code and message will be returned.

The limits are:

- MaxDocsScanned – maximum allowable NSF documents scanned ( 200000 default)

- MaxEntriesScanned – maximum allowable index entries scanned (200000 default)

- MaxMsecs – maximum time consumed in milliseconds ( 120000 ) (2 minutes)

These values can be overridden using the following notes.ini settings (or using the “set configuration” console command) system wide:

QUERY_MAX_DOCS_SCANNED = <system-wide maximum allowable NSF document scanned>

QUERY_MAX_VIEW_ENTRIES_SCANNED = <system-wide maximum allowable view entries scanned>

QUERY_MAX_MSECS_TOTAL = <system-wide maximum query time consumed in milliseconds>

In addition to notes.ini, these limit settings can be overridden using any of the API interfaces to the DQL engine.

Performance/System Resource Utilization

Of course, DQL processing consumes memory, disk and CPU. In the interest of preserving those resources, as well as speeding up the time to query results, it is important to optimize your queries as much as possible.

At present there are two ways to service individual query terms in DQL – NSF data scans and view entry scans. In general, view entries are more efficient to use, though that can vary by view construction and size of results. Equality searches that find a small or medium number of documents are particularly faster using views. So, it becomes important to know how the DQL engine optimizes access to use views.

DQL processing will function very well in the mix of operational traffic on a Domino server but it is not free in that aspect either. Read-only view access will increase, as will NSF summary data access. That processing is nothing new to the server, but its effect should be studied to avoid any regression in related processing.

To use the index of a view or folder, that index must have some special properties.

Identifying view columns used by DQL Processing

DQL processing uses primary collated columns for view access. That means the view columns must conform to the following collation rules :

- A collated column is either the leftmost column in the view with Sort order of Ascending checked in the view or

- A column with

Click on column to sort Ascendingchecked in the Designer View Column collation tab.

Visually in the Notes© client, when a view is open, the columns with the

Click on column to sort Ascending have carets appear when any user does

mouseover of the column name:

In addition to basic index existence, the following attributes must be true for a view column to be used by DQL processing:

- View must have only Select @All as its selection criteria

- There must be a collated column with ONLY the field name as its formula

- The view column must be non-categorized and marked to “Show multiple values as separate entries”

These additional 3 criteria are necessary for a field name to be processed using

a view using <SummaryField> <Operator> <Value> syntax. It is still the case

that if a view index cannot be found for a <SummaryField> <Operator> <Value>

term, NSF scanning will be used to satisfy it.

If the <'View or folder name'>.<Columnname> syntax is used, only the initial

above bulleted collation rules must apply. If a

<'View or folder name'>.<Columnname> term is part of a query and the

collation rules are not true for that column, the query will fail with a

return code.

Note -

<'View or folder name'>.<Columnname>

terms apply two levels of filtering for the result set of documents, the

view selection criteria and the query term itself

The Design Catalog

For high speed access to internal information about views and view columns, DQL processing uses design data extracted from view notes. Currently this information will be stored in a new database, named GQFdsgn.cat. It is created using new updall flags. It does not replicate and is solely used as a fast-path tool to access design data at runtime. Further, its design and data within it are proprietary and volatile so access and updating by any other entity than the local Domino server is not supported.

Once a single database (that is any database) has been catalogued, DQL queries

will operate without a Design Catalog. Only the

<'View or folder name'>.<Columnname> syntax will fail. All other syntax will

function but there will be no view access and all terms will be satisfied

utilizing NSF scanning.

However, DQL processing is greatly enhanced by having design data in the catalog.

To create GQFdsgn.cat and update its data, the updall -e and -d options are used. From the Domino console:

load updall <database path> -e

load updall <database path> -d

where -e will add a database to the design catalog and -d will update the design

data stored for a database. If <database path> is omitted, all databases under

the data directory will be added to the design catalog. Though it will function,

it is not recommended as it is a rather large superset of what applications need

to run DQL.

There is no automatic invocation of -e or -d updall options. If you change your view design or add a view, you must run updall -d for the changes to be known by DQL. If GQFdsgn.cat becomes corrupt, updall -e will create it afresh.

DomQuery

The DomQuery command line utility is shipped with the Domino server and is useful to compose and tune queries. Its EXPLAIN output is instrumental in understanding and optimizing DQL syntax and how queries are processed.

Its options are given below:

-f [DBName] data directory relative path, REQUIRED

-q [double quoted string query] query string - either this or -z file required

-z [QueryFile path] full path to a file containing query syntax queries delimited by #* at preceding line begin

-e Explain the nodes

-p Parse only (for testing)

-v [MaxEntries] Maximum view entries to be scanned

-c [MaxDocsScanned] Maximum number of documents to be scanned

-m [Msecs] Maximum milliseconds to execute

-x Exit on error (-z file case)

-j No view processing performed (only NSF document scan and FT)

-o [Output Report File path] full path to a file to which output will be written

DomQuery neither fetches from nor makes updates to data in NSF files. It is solely useful to author and optimize queries.

In Unix (Linux and AIX), DomQuery is lower case - domquery.

EXPLAIN

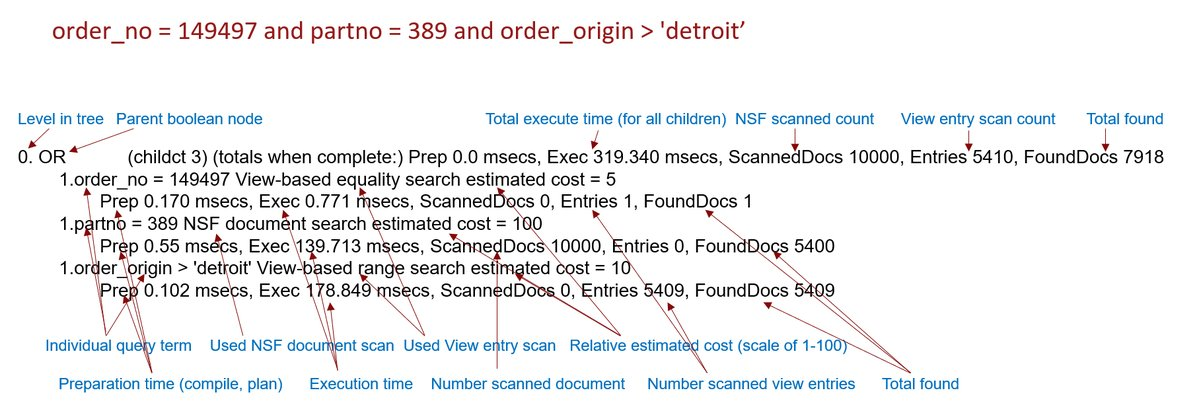

The best way to understand how DQL syntax is going to be processed is by using EXPLAIN output. For the query:

order_no = 149497 or partno = 389 or order_origin > 'detroit’

the following is a graphical description of EXPLAIN output:

where DQL processing treats all “sibling” OR Boolean operators as a single parent node and the individual terms its children. Each term is serviced either by view processing or NSF scan operation. The number of Entries (views) or ScannedDocs (NSF scans) indicate the relative work done to satisfy the term. There are two millisecond timings, Prep and Exec, where Prep is the time it took to compile, validate and prepare the term and Exec the time to execute it. The FoundDocs value shows the total documents that passed the term’s conditions.

All counts and timings are rolled up into the parent node where the total cost of the query is displayed.

Of course, EXPLAIN output can get much more complicated when queries are more complicated. But the same tuning practices will be effective in optimizing performance:

- Locate the most expensive terms using both timings and counts.

- For field terms, consider the overall usage of the field being used. If it commonly used in queries PLUS it is expensive the consider creating a view column with attributes specified above so the term can be optimized.

- For view column terms, consider the width (how many columns) and the size of the view. If you can use a view with fewer columns and/or one with more restrictive selection criteria, consider that course of action.

In general, keeping even intermediate results as small as possible is a win. In that regard, ANDing terms together shrinks results while ORing terms together grows them. Of course, the application logic has its requirements, but the phenomena should also be considered.